Major core upgrade delivering faster runs, higher reliability, and shared context.

What’s new

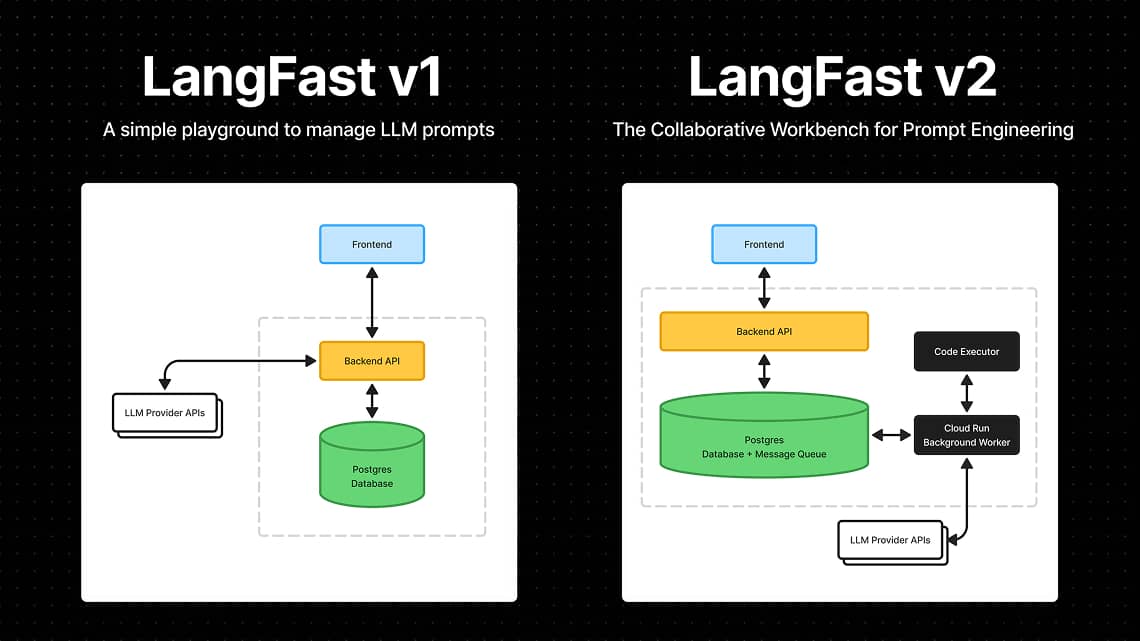

- Async runs — LLM API calls now execute asynchronously in a dedicated Cloud Run worker

- Realtime streaming — LLM response chunks stream to the web app over WebSockets for near-instant feedback

- Run history (foundation) — Every prompt run and its result are now persisted. The latest run is visible today; a full history browser is coming soon
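The three changes above fit together as one flow: a run starts without blocking the caller, chunks are forwarded as they arrive, and each chunk is recorded along the way. The sketch below illustrates that flow with plain `asyncio`. It is not the actual worker code: `fake_llm_stream`, `execute_run`, `RunRecord`, and `RUN_STORE` are hypothetical names, an in-memory dict stands in for the database, and an `asyncio.Queue` stands in for the WebSocket connection.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class RunRecord:
    """One persisted prompt run (hypothetical shape, not the real schema)."""
    run_id: str
    prompt: str
    chunks: list[str] = field(default_factory=list)
    status: str = "running"


# Assumption: an in-memory dict stands in for the real run store.
RUN_STORE: dict[str, RunRecord] = {}


async def fake_llm_stream(prompt: str):
    """Placeholder for a streaming LLM API call; yields text chunks."""
    for word in f"echo: {prompt}".split(" "):
        await asyncio.sleep(0)  # yield control, as real network I/O would
        yield word + " "


async def execute_run(run_id: str, prompt: str, outbox: asyncio.Queue) -> None:
    """Worker side: persist each chunk and forward it as soon as it arrives."""
    record = RunRecord(run_id, prompt)
    RUN_STORE[run_id] = record
    async for chunk in fake_llm_stream(prompt):
        record.chunks.append(chunk)  # persist first, so nothing streamed is lost
        await outbox.put(chunk)      # a real worker would send over a WebSocket
    record.status = "done"
    await outbox.put(None)           # sentinel marking the end of the stream


async def collect(prompt: str) -> str:
    """Client side: start the run in the background and read chunks live."""
    outbox: asyncio.Queue = asyncio.Queue()
    task = asyncio.create_task(execute_run("run-1", prompt, outbox))
    received = []
    while (chunk := await outbox.get()) is not None:
        received.append(chunk)
    await task
    return "".join(received)


if __name__ == "__main__":
    print(asyncio.run(collect("hello world")))
```

Recording each chunk before forwarding it means the stored run always contains at least what the client has seen, which is one simple way to keep the persisted result and the streamed output in agreement.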

Why it matters

- Faster perceived responses, fewer timeouts, more stability

- Persisted runs and results you can revisit, giving every prompt a shared context

How to use

- Run a prompt as usual

- Watch tokens stream live

- Refresh or switch file tabs anytime — execution logs persist
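One way to picture why a refresh loses nothing: if the log lives on the server, a fresh page can rebuild its view from the stored chunks and then keep appending new ones. The sketch below shows that idea only. How the web app actually restores logs is not described here, so `RunLog`, `RunView`, and `sync` are hypothetical names for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class RunLog:
    """Server-side record of one run (hypothetical shape)."""
    chunks: list[str] = field(default_factory=list)


class RunView:
    """Client-side view that renders whatever chunks it has caught up on."""

    def __init__(self) -> None:
        self.seen = 0   # number of chunks already rendered
        self.text = ""

    def sync(self, log: RunLog) -> None:
        """Append only the chunks this view has not rendered yet."""
        for chunk in log.chunks[self.seen:]:
            self.text += chunk
        self.seen = len(log.chunks)


log = RunLog()
view = RunView()
log.chunks += ["Hel", "lo"]
view.sync(log)

# Simulate a page refresh: the view is discarded, the persisted log is not.
view = RunView()
log.chunks.append(", world")
view.sync(log)
print(view.text)  # Hello, world
```

Because the view tracks only a position in the log, the same `sync` call covers both the catch-up after a refresh and the normal live update.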
