Pay once, use forever

No subscription, no hidden fees. Just a one-time payment for lifetime access.

Key benefits

- Remove ads / popups

- 150+ AI models (BYOK)

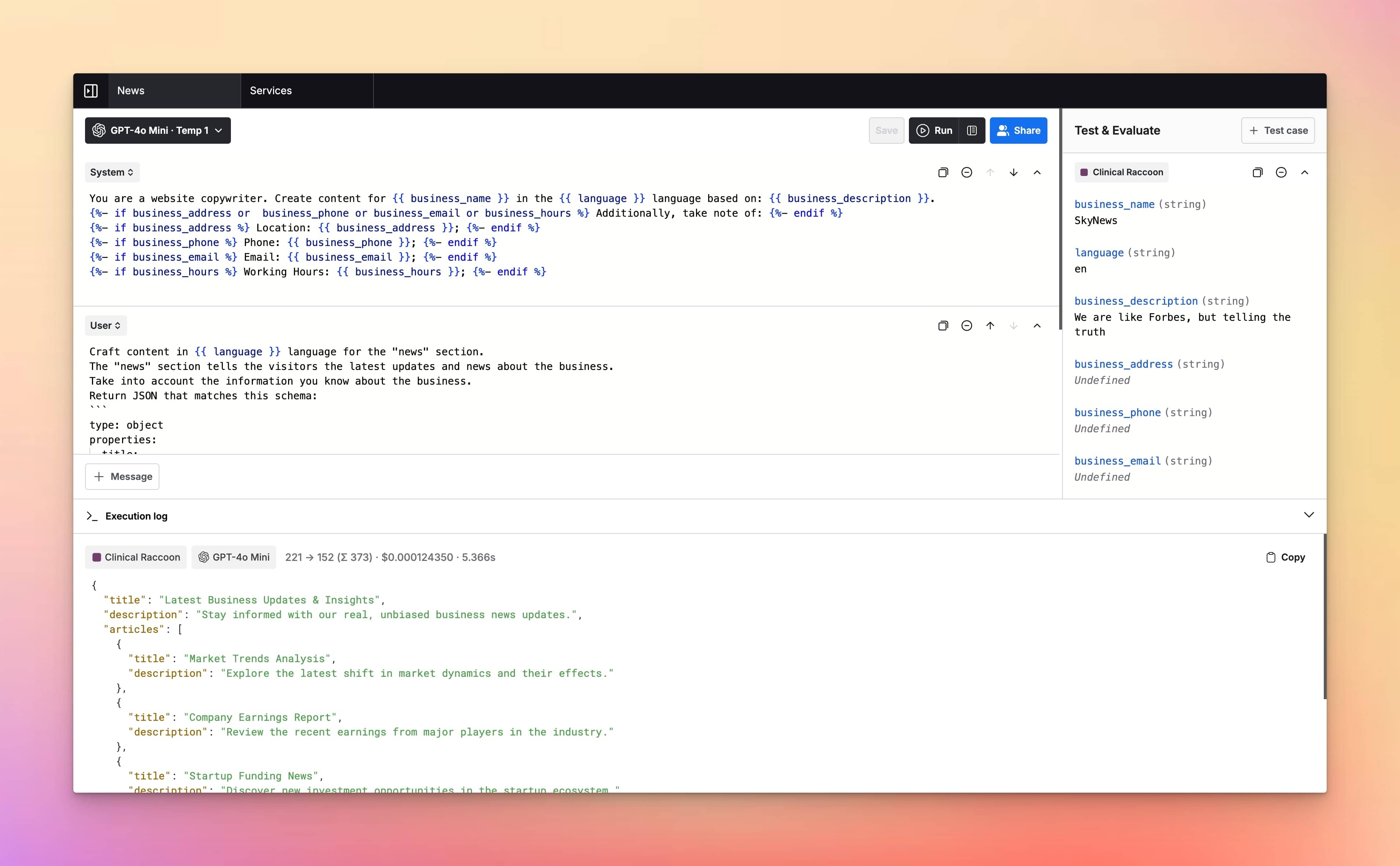

- AI Playground & Chats

- Prompt management

- Prompt evaluations

- Variables & Templates

- Share links & Collaboration

- 1 GB Storage included

Lifetime Access

+ Free updates and access to new features

Explore All Features

Supported AI Models

- GPT-5

- GPT-5 Mini

- GPT-5 Nano

- GPT-4.1

- GPT-4.1 Mini

- GPT-4.1 Nano

- GPT-4o

- GPT-4o Mini

- O1

- O1 Mini

- O3

- O3 Mini

- O4 Mini

- GPT-4 Turbo

- GPT-3.5 Turbo

- Claude AI Models (soon)

- Gemini AI Models (soon)

- Model Fine-tuning (soon)

Model configuration

- Custom System Instructions

- Reasoning Effort Control

- Stream Response Control

- Temperature Control

- Presence & Frequency Penalty

User Interface

- Customizable Workspace

- Wide Screen Support

- Hotkey & Shortcuts

- Voice Input (soon)

- Text-to-Speech (soon)

Playground Experience

- Prompt Library

- Prompt Templates & Variables

- Jinja2 Templates Support

- Upload Documents (soon)

- Language Output Control

- Parallel Chat Support

Prompt Management

- Prompt Folders

- Edit & Fork Prompts

- Prompt Versioning

- Upload Documents (soon)

- Share Prompts

Cost & Performance

- Cost estimation

- Token usage tracking

- Context length indicator

- Max token settings
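As a rough illustration of how cost estimation and a context length check can be derived from token counts, here is a minimal Python sketch. It is not LangFast's implementation: the `Pricing` class, the function names, and the prices are hypothetical placeholders, and real per-token prices come from the model provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical price table entry, in USD per one million tokens."""
    input_per_million: float
    output_per_million: float


def estimate_cost(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """Estimate the cost of one request from its token counts."""
    total = (input_tokens * pricing.input_per_million
             + output_tokens * pricing.output_per_million)
    return total / 1_000_000


def fits_context(input_tokens: int, max_output_tokens: int, context_window: int) -> bool:
    """Check that the prompt plus the max token setting fits the context window."""
    return input_tokens + max_output_tokens <= context_window


# Made-up prices for illustration only; not real provider pricing.
example_pricing = Pricing(input_per_million=1.0, output_per_million=4.0)

cost = estimate_cost(input_tokens=2000, output_tokens=500, pricing=example_pricing)
print(f"Estimated cost: ${cost:.4f}")
print("Fits an 8,000-token window:", fits_context(2000, 500, 8000))
```

Keeping the max output tokens in the context check matters because the response shares the window with the prompt.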

Security and Privacy

- Private by Default

- API Tokens Cost Estimation

- No chats used for training

- Web Search & Live Data (soon)

Integrations

Plugins

- Custom Plugins (soon)

- Image search plugin (soon)

- Dall-E 3 (soon)

- Web page reader (soon)

Meet LangFast users

LangFast empowers hundreds of people to test and iterate on their prompts faster.

Frequently Asked Questions

What is LangFast?

LangFast is an online LLM playground for rapid testing and evaluation of prompts. You can run prompt tests across multiple models, compare responses side-by-side, debug results, and iterate on prompts in one place with your own API keys.

How does LangFast work?

Type a prompt and stream a response, then switch or compare models side-by-side; you can save/share a link, use Jinja2 templates or variables, and create as many test cases as you want.

Which large language models can I try on LangFast?

Currently, LangFast supports OpenAI (GPT) models only. If you need access to models from other providers, just let us know, and we'll add them.

Do I need an API key?

Yes. You need to provide your own API keys to run models on LangFast. API keys are sent over secure transport to the backend and stored encrypted server-side. The plaintext value is used only for provider calls and is not exposed back to your browser.

How fast is LangFast?

We stream tokens through a tiny proxy layer for low-latency responses with your API keys. Typical time to first token is a fraction of a second. Speed varies by model and load.
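If you want to check first-token latency yourself, one way is to time a streamed response as it is consumed. The Python sketch below assumes the stream is any iterable of text chunks; `fake_stream` is a hypothetical stand-in for a real provider stream, and `measure_stream` is an illustrative helper, not part of LangFast.

```python
import time
from typing import Iterable, Iterator, Tuple


def fake_stream(tokens: Iterable[str], delay_s: float = 0.01) -> Iterator[str]:
    """Hypothetical stand-in for a provider's streamed response."""
    for token in tokens:
        time.sleep(delay_s)  # simulate per-token network/model latency
        yield token


def measure_stream(stream: Iterable[str]) -> Tuple[str, float, float]:
    """Consume a stream and return (text, time_to_first_token_s, total_s)."""
    start = time.perf_counter()
    first_token_at = None
    parts = []
    for token in stream:
        if first_token_at is None:
            first_token_at = time.perf_counter()  # first chunk arrived
        parts.append(token)
    end = time.perf_counter()
    # An empty stream has no first token, so report NaN in that case.
    ttft = (first_token_at - start) if first_token_at is not None else float("nan")
    return "".join(parts), ttft, end - start


text, ttft, total = measure_stream(fake_stream(["Hello", ", ", "world"]))
print(f"{text!r}: first token after {ttft:.3f}s, finished after {total:.3f}s")
```

With a real provider, the measured values will depend on the model, the prompt length, and current load.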

What's the context window?

Depends on the model (e.g., 8K–200K tokens). We show it next to each model.

Can I upload files or images?

Yes, as long as they are supported by the model itself.

Can I compare models side-by-side?

Yes. You can open as many chat tabs as you want to see multiple models answer the same prompt.
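Conceptually, a side-by-side comparison sends one prompt to several models at once and collects the answers under each model's name. The Python sketch below shows that fan-out under stated assumptions: each model is represented by a plain function from prompt to answer, and the `model-a` and `model-b` entries are hypothetical stand-ins rather than real provider calls or LangFast internals.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict

ModelFn = Callable[[str], str]


def compare_models(prompt: str, models: Dict[str, ModelFn]) -> Dict[str, str]:
    """Send the same prompt to every model in parallel and collect the answers."""
    with ThreadPoolExecutor(max_workers=max(1, len(models))) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        # result() blocks until that model's answer is ready.
        return {name: future.result() for name, future in futures.items()}


# Hypothetical stand-ins; a real setup would call a provider API here.
models: Dict[str, ModelFn] = {
    "model-a": lambda p: p.upper(),
    "model-b": lambda p: p[::-1],
}

results = compare_models("Hello", models)
for name, answer in results.items():
    print(f"{name:8} | {answer}")
```

Threads suit this case because each call mostly waits on the network, so the slowest model sets the total wait rather than the sum of all calls.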

Can I save or share chats?

Yes. Use the "Share" button to manage sharing permissions. You can create public URLs or share access with specific email addresses.

Where is my data processed?

We route to model providers; see the Data & Privacy page for regions and details.

Can I use outputs commercially?

Generally yes, subject to each model's terms. We link those on the model picker.

Can I bring my own API keys?

Yes, you can. Just let us know, and we'll add them to your workspace.

Does LangFast have an API?

Yes. Reach out to us to get more information.

What's the difference between LangFast and paid LLM APIs?

LangFast is point-and-click for quick evaluation, while paid LLM APIs provide programmatic control, higher throughput, predictable limits, and SLAs for production. Use LangFast to find the right prompt-to-model setup, then ship with APIs.

How does LangFast compare to OpenAI Playground or Hugging Face Spaces?

LangFast focuses on instant multi-model testing with your own API keys, a consistent UI, side-by-side comparisons, share links, and exports in one place, offering a streamlined alternative to OpenAI Playground and Hugging Face Spaces.