Mistral Next Playground for Prompt Testing

Try Mistral Next, test prompts side-by-side across models, and measure quality, cost, and latency.

Bring your API keys. Pay once, use forever.

Best Mistral Next Playground

Try quickly

Test a “next” model without setup friction.

Compare behavior

Benchmark against stable models and alternatives.

Use variables

Repeatable evaluation with templates.

Share & export

Links, transcripts, export.

Private by default

We don’t train on your data.

Instant access

Bring your API keys. Start testing immediately.

Why Us over other LLM Playgrounds

Other playgrounds (from VC-backed companies)

Mistral Next Playground (powered by LangFast)

Explore All Features

Supported AI Models

- GPT-5

- GPT-5 Mini

- GPT-5 Nano

- GPT-4.1

- GPT-4.1 Mini

- GPT-4.1 Nano

- GPT-4o

- GPT-4o Mini

- O1

- O1 Mini

- O3

- O3 Mini

- O4 Mini

- GPT-4 Turbo

- GPT-3.5 Turbo

- Claude AI Models (soon)

- Gemini AI Models (soon)

- Model Fine-tuning (soon)

Model configuration

- Custom System Instructions

- Reasoning Effort Control

- Stream Response Control

- Temperature Control

- Presence & Frequency Penalty

User Interface

- Customizable Workspace

- Wide Screen Support

- Hotkeys & Shortcuts

- Voice Input (soon)

- Text-to-Speech (soon)

Playground Experience

- Prompt Library

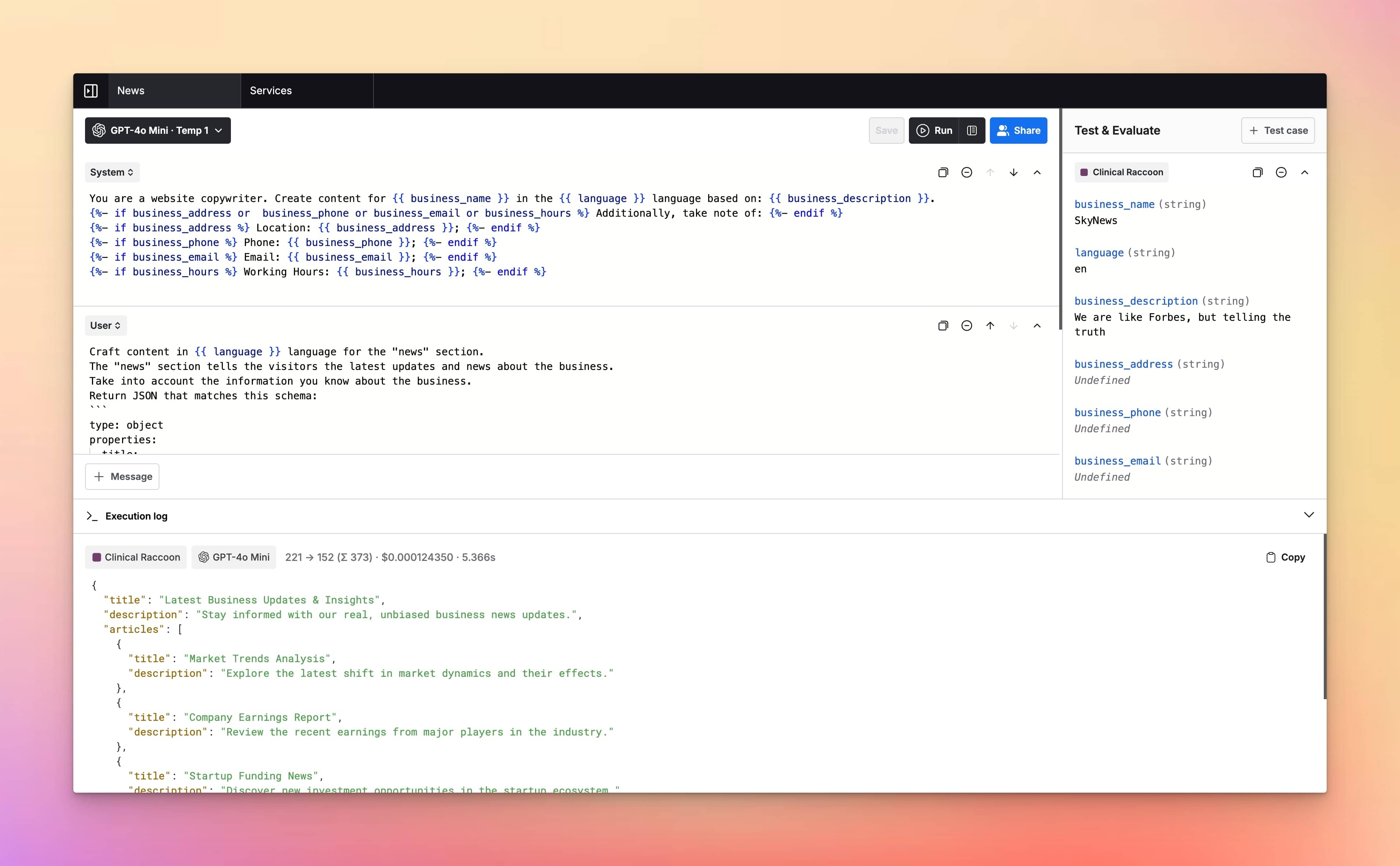

- Prompt Templates & Variables

- Jinja2 Templates Support

- Upload Documents (soon)

- Language Output Control

- Parallel Chat Support

Prompt Management

- Prompt Folders

- Edit & Fork Prompts

- Prompt Versioning

- Upload Documents (soon)

- Share Prompts

Cost & Performance

- Cost estimation

- Token usage tracking

- Context length indicator

- Max token settings

Security and Privacy

- Private by Default

- API Tokens Cost Estimation

- No chats used for training

- Web Search & Live Data (soon)

Integrations

Plugins

- Custom Plugins (soon)

- Image search plugin (soon)

- DALL-E 3 (soon)

- Web page reader (soon)

Meet LangFast users

LangFast empowers hundreds of people to test and iterate on their prompts faster.

Frequently Asked Questions

What is a Mistral Next playground?

This Mistral Next playground is a UI for prompt testing and evals on a preview model, so you can measure behavior changes before adopting it in production.

What is this page optimized for?

Pre-adoption evaluation: regression tests, side-by-side comparisons with the stable model you ship today, and quick checks for formatting and instruction-following.

Do I need an API key?

Yes. Bring your own API keys. LangFast routes your requests to the model provider through its proxy.

Why require signup for a preview model page?

To keep the playground stable (abuse prevention + rate limits) and to let you save runs, compare deltas, and share results with your team.

How should I evaluate a preview model?

Use a fixed regression suite: your strictest formatting prompts, your most failure-prone edge cases, and your “must-pass” examples from production.

What are the biggest risks with preview models?

Behavior drift: outputs may change between updates. This is exactly why you should run repeatable eval prompts before switching.

Can I compare Mistral Next with the stable model version?

Yes. Run the same prompt set side-by-side and inspect differences in quality, safety behavior, and formatting compliance.

Can I quantify improvements or regressions?

Yes. Use rubric scoring (clarity, correctness, structure) and track pass/fail on strict format checks to see if it’s truly better.
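
As a rough illustration, rubric scores and format pass/fail could be tallied like this once you have scored a set of runs. The `RunResult` fields and the 1-5 scale are assumptions made for this sketch, not a LangFast export format.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RunResult:
    """One scored response. Field names are hypothetical, not a LangFast schema."""
    prompt_id: str
    clarity: int        # rubric score, assumed 1-5 scale
    correctness: int
    structure: int
    format_ok: bool     # did the output pass the strict format check?


def summarize(results: list[RunResult]) -> dict[str, float]:
    """Return the mean rubric score and the format pass rate for one model."""
    if not results:
        raise ValueError("no results to summarize")
    rubric = mean(mean((r.clarity, r.correctness, r.structure)) for r in results)
    pass_rate = sum(r.format_ok for r in results) / len(results)
    return {"rubric_mean": round(rubric, 2), "format_pass_rate": round(pass_rate, 2)}


# Made-up scores for two prompts, run against a stable and a preview model.
stable = [RunResult("p1", 4, 5, 4, True), RunResult("p2", 4, 4, 4, True)]
preview = [RunResult("p1", 5, 5, 4, True), RunResult("p2", 5, 4, 3, False)]

stable_summary = summarize(stable)
preview_summary = summarize(preview)
print("stable:", stable_summary)
print("preview:", preview_summary)
```

In this made-up data the preview model scores higher on the rubric but fails one format check, which is the kind of trade-off a single average would hide.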

Can I test structured output formats like JSON or schemas?

Yes. Preview models can break formatting unexpectedly—use eval prompts that enforce your output contract.
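
A strict format check can be as small as the sketch below, which parses a response and compares it to an output contract. The `CONTRACT` keys and the `check_contract` helper are hypothetical examples, not part of LangFast or any provider SDK.

```python
import json

# Hypothetical output contract: required keys and the Python type each must have.
CONTRACT = {"title": str, "priority": int, "tags": list}


def check_contract(raw_output: str, contract: dict[str, type] = CONTRACT) -> list[str]:
    """Return a list of violations; an empty list means the output passed."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not a JSON object"]

    problems = []
    for key, expected in contract.items():
        if key not in data:
            problems.append(f"missing key: {key}")
            continue
        value = data[key]
        wrong_type = not isinstance(value, expected)
        # bool is a subclass of int in Python, so reject it explicitly for int fields.
        if expected is int and isinstance(value, bool):
            wrong_type = True
        if wrong_type:
            problems.append(f"wrong type for {key}: expected {expected.__name__}")

    # Keys outside the contract also count as violations in this strict check.
    extra = sorted(set(data) - set(contract))
    problems.extend(f"unexpected key: {key}" for key in extra)
    return problems


print(check_contract('{"title": "Reset password", "priority": 2, "tags": ["auth"]}'))
print(check_contract('{"title": "Reset password", "priority": "high", "tags": []}'))
```

Running the same check over responses from the stable and the preview model gives a pass/fail count per model that is easy to compare.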

Can I run multi-turn tests?

Yes. If your product relies on multi-turn behavior, create a repeatable conversation script and rerun it across versions.
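
One way to picture a repeatable conversation script is a fixed list of user turns replayed against any model function. In this sketch `replay_script` and `echo_model` are hypothetical names, and `echo_model` is only a stand-in for a real provider call.

```python
from typing import Callable

Message = dict[str, str]
# A model is any callable that maps the conversation so far to a reply string.
ModelFn = Callable[[list[Message]], str]


def replay_script(user_turns: list[str], model: ModelFn, system: str = "") -> list[Message]:
    """Feed a fixed list of user turns to `model`, carrying history forward."""
    history: list[Message] = []
    if system:
        history.append({"role": "system", "content": system})
    for turn in user_turns:
        history.append({"role": "user", "content": turn})
        reply = model(list(history))  # pass a copy so the model cannot mutate history
        history.append({"role": "assistant", "content": reply})
    return history


def echo_model(messages: list[Message]) -> str:
    """Stand-in for a real API call; replace with your provider client."""
    user_count = sum(m["role"] == "user" for m in messages)
    return f"reply {user_count} to: {messages[-1]['content']}"


SCRIPT = ["I was charged twice.", "Order 1042.", "Yes, refund to the original card."]
transcript = replay_script(SCRIPT, echo_model, system="You are a support agent.")
for message in transcript:
    print(f"{message['role']}: {message['content']}")
```

Because the script is plain data, the same turns can be rerun against each model version and the transcripts compared turn by turn.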

Can I use variables/templates for realistic inputs?

Yes. Inject real examples (tickets, policies, product data) so you’re not benchmarking on toy prompts.
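
The idea of filling one template with many real inputs can be sketched with Python's standard `string.Template`. This is a dependency-free stand-in, not LangFast's own variable syntax, and the template text and cases are invented for illustration.

```python
from string import Template

# Hypothetical prompt template; $policy and $ticket are the variables to fill.
PROMPT = Template(
    "Classify this support ticket under the policy below.\n"
    "Policy: $policy\n"
    "Ticket: $ticket\n"
    "Answer with one word: refund, replace, or escalate."
)

# Invented example inputs; in practice these would come from real tickets.
CASES = [
    {"policy": "Refunds within 30 days.", "ticket": "Bought 10 days ago, arrived broken."},
    {"policy": "Refunds within 30 days.", "ticket": "Bought 90 days ago, stopped working."},
]


def render_all(template: Template, cases: list[dict[str, str]]) -> list[str]:
    """Render one prompt per case; substitute() raises KeyError if a variable is missing."""
    return [template.substitute(case) for case in cases]


prompts = render_all(PROMPT, CASES)
print(prompts[0])
```

Using `substitute` rather than `safe_substitute` makes a missing variable fail loudly instead of sending a half-filled prompt to the model.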

Can I export calls for engineering?

Yes. Export to cURL, JS, or JSON so engineering can reproduce the exact request and parameters.

Can I save/share results?

Yes. Save runs for auditability and share links for review or rollout decisions.

Is it free?

LangFast's basic features are free to use. To run models, you provide your own API keys and pay the model provider (e.g., OpenAI) for the credits/tokens you use. LangFast premium features can be unlocked with a one-time purchase.

What happens after I hit limits?

Wait for the limit to reset, or add paid usage to keep running preview evaluations.

How fast is it?

We stream responses through a lightweight proxy. Speed depends on Mistral Next and load; compare latency across models directly.

Do you train on my prompts?

No. We don’t train on your prompts or data. Sharing is opt-in and retention is configurable.

Where is my data processed?

Requests route to model providers. See the Data & Privacy page for processing regions and details.

How does this compare to LangChain?

LangChain helps you build systems. LangFast helps you evaluate preview model behavior before you implement or migrate.

How does this compare to Langfuse, Basalt, or PromptLayer?

Those tools manage datasets and evals inside pipelines. LangFast is the quickest way to run interactive regression tests and compare preview vs stable models.